Florian Vogt

Hej! I am a Research Engineer at KTH Royal Institute of Technology in Stockholm, where I work on Reinforcement Learning.

I received my Master's degree from the University of Freiburg. I now want to focus on applying Reinforcement Learning to real-world problems.

I love solving problems that demand both research and engineering effort. My work focuses mostly on sample-efficient and computationally efficient RL, and on scaling it effectively to challenging tasks.

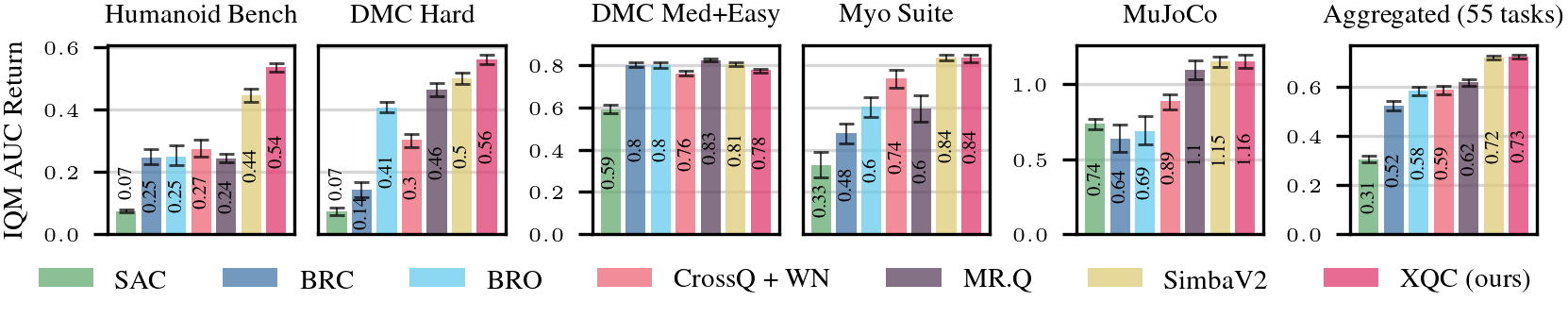

This effort resulted in XQC, a state-of-the-art algorithm in off-policy RL that achieves its performance through surprisingly simple methods.